Getting Started

Install and configure Pritunl Cloud

Pritunl Cloud is a distributed private cloud server written in Go using Qemu and MongoDB. This documentation explains how to install and run Pritunl Cloud on a local server, and details the configuration on an Oracle Linux 9 or Ubuntu server.

Hardware Virtualization Support

If the host CPU does not support hardware virtualization, such as when running Pritunl Cloud inside a virtual machine, the Hypervisor Mode must be set to Qemu. Some virtual machine software allows enabling virtualization support in virtual machines as documented below.
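One way to check whether the host CPU exposes hardware virtualization before choosing a Hypervisor Mode is to look for the vmx (Intel VT-x) or svm (AMD-V) flags in /proc/cpuinfo. The sketch below is a minimal check and is not part of Pritunl Cloud. The function name has_hw_virt is hypothetical, and the optional file argument is only there so the check can be run against a saved copy of cpuinfo.

```shell
# Succeeds if the given cpuinfo file lists the vmx (Intel VT-x) or
# svm (AMD-V) CPU flag. has_hw_virt is a hypothetical helper name.
has_hw_virt() {
    cpuinfo="${1:-/proc/cpuinfo}"
    grep -E -q '^flags[[:space:]]*:.*[[:space:]](vmx|svm)([[:space:]]|$)' \
        "$cpuinfo" 2>/dev/null
}

if has_hw_virt; then
    echo "hardware virtualization flags found"
else
    echo "no vmx/svm flag found, the Qemu hypervisor mode is likely needed"
fi
```

A missing flag inside a virtual machine usually means the outer hypervisor has not been configured to pass virtualization support through to the guest.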

Cloud Comparison

Running an on-site datacenter for production resources isn't a realistic option for most companies, but development systems that do not need high availability will often benefit from an on-site solution. Development instances are often run on slower cloud instances to reduce costs, which slows development as developers wait for software to compile and start. An on-site Pritunl Cloud platform can be built for a fraction of the cost of equivalent cloud instances. This will give developers low cost, fast and secure access to on-site instances for development use.

- Lower Costs: Running a small on-site cloud platform for development instances can significantly reduce cloud costs.

- Faster Development: Development speed will improve as developers will have on-site low latency access to high performance instances that don't share oversold resources.

- Improved Security: Pritunl Cloud can be configured on the private company network preventing accidental data leaks from misconfigured firewalls.

Managed Switches

The Pritunl Cloud VPC design uses VLANs for VPC networks. If the network has managed switches that are VLAN aware or control the routing of VLANs, the Zone will need to use the VXLAN network mode.

Install

Below is a list of supported operating systems for the Pritunl Cloud host.

- Oracle Linux 9 (Recommended)

- AlmaLinux 9

- Ubuntu 22.04

Configure Bridge Interfaces (Optional)

Pritunl Cloud will use MAC address based bridging on existing network adapters. Optionally for more advanced configurations a bridge interface can be configured.

First remove any existing network configurations.

sudo nmcli con

sudo nmcli con delete <CONN_NAME>

The code below will configure an interface on the bridge pritunlbr0. Each bridge should be named pritunlbr followed by a number. A bridge should be configured for each individual interface. Bonding is not necessary because Pritunl Cloud will balance instances between multiple interfaces. The first set of commands will configure a DHCP interface and the second will configure a static interface.

For the DHCP configuration, replace $IFACE with the interface name on the server. Replace $MTU with the network MTU. The configuration should be verified before restarting the server to prevent losing connectivity.
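Before running the nmcli commands it can help to sanity-check the values being substituted. The sketch below assumes the interface is already running with the correct network MTU, so the current value in sysfs can be reused. Both helper names are hypothetical and the second argument of iface_mtu exists only for testing.

```shell
# Hypothetical helpers for checking values before running nmcli.

# Succeeds if the name is "pritunlbr" followed by one or more digits.
is_pritunl_bridge_name() {
    printf '%s\n' "$1" | grep -E -q '^pritunlbr[0-9]+$'
}

# Prints the current MTU of an interface as reported by sysfs.
# The second argument (sysfs base directory) is only used for testing.
iface_mtu() {
    cat "${2:-/sys/class/net}/$1/mtu"
}

is_pritunl_bridge_name pritunlbr0 && echo "pritunlbr0 is a valid bridge name"
```

With these defined, MTU="$(iface_mtu "$IFACE")" sets $MTU from the existing interface once $IFACE has been set.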

sudo nmcli con add type bridge ifname pritunlbr0

sudo nmcli con modify bridge-pritunlbr0 ipv4.method auto ipv6.method auto 802-3-ethernet.mtu $MTU

sudo nmcli con add type bridge-slave ifname $IFACE master pritunlbr0

sudo nmcli con modify bridge-slave-$IFACE 802-3-ethernet.mtu $MTU

sudo nmcli con up bridge-slave-$IFACE

sudo nmcli con up bridge-pritunlbr0

For the static configuration, replace $IFACE with the interface name on the server. Replace $IP with the IP address of the interface. Replace $CIDR with the prefix length of the subnet (for example 24). Replace $GATEWAY with the gateway IP address. Replace $MTU with the network MTU. The configuration should be verified before restarting the server to prevent losing connectivity.

sudo nmcli con add type bridge ifname pritunlbr0

sudo nmcli con modify bridge-pritunlbr0 ipv4.method manual ipv4.addresses $IP/$CIDR ipv4.gateway $GATEWAY 802-3-ethernet.mtu $MTU

sudo nmcli con add type bridge-slave ifname $IFACE master pritunlbr0

sudo nmcli con modify bridge-slave-$IFACE 802-3-ethernet.mtu $MTU

sudo nmcli con up bridge-slave-$IFACE

sudo nmcli con up bridge-pritunlbr0

Install Pritunl Cloud

Connect to the server and run the commands below to update the server and download the Pritunl Cloud Builder. The update commands shown use apt and apply to Ubuntu. Check the repository for newer versions of the builder. When running the builder use the default yes option for all prompts.

sudo apt update

sudo apt -y upgrade

wget https://github.com/pritunl/pritunl-cloud-builder/releases/download/1.0.2653.32/pritunl-builder

echo "b1b925adbdb50661f1a8ac8941b17ec629fee752d7fe73c65ce5581a0651e5f1  pritunl-builder" | sha256sum -c -

chmod +x pritunl-builder

sudo ./pritunl-builder

Run the command sudo pritunl-cloud default-password to get the default login password. Then open a web browser to the server's IP address and log in.

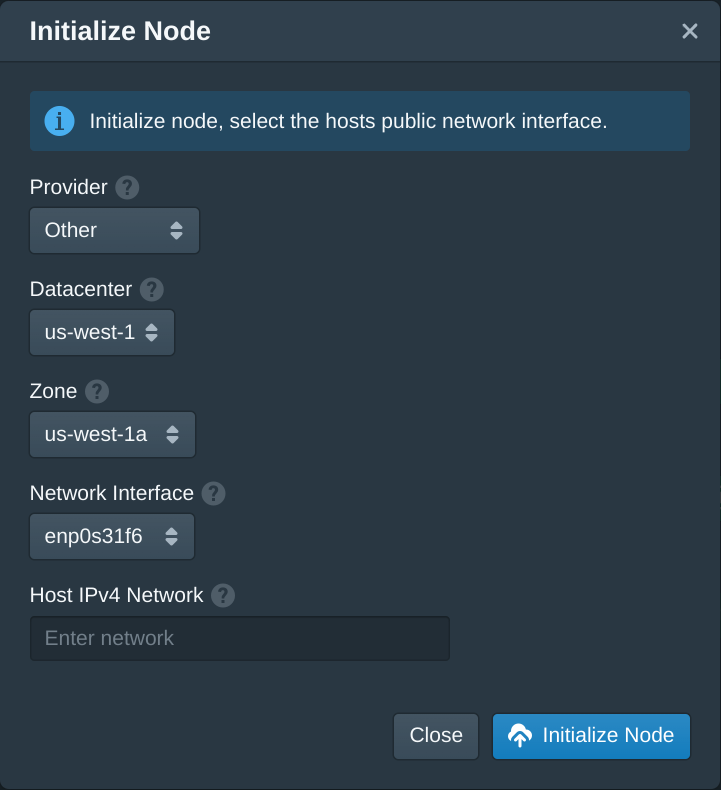

sudo pritunl-cloud default-password

In the Nodes tab click Initialize Node. Set the Provider to Other then select the first datacenter and zone. These can be renamed later.

If Pritunl Cloud is being run on a private network with enough IP addresses for all instances the host network can be left blank. If it is run on a server with limited public IP addresses a host network should be configured.

Pritunl Cloud instances can be configured to run without public IP addresses or, when Pritunl Cloud runs on a private network, without DHCP addresses from that private network. Instances without public IP addresses remain NATed behind the Pritunl Cloud host IP address. To accomplish this, Pritunl Cloud creates an additional host-only network that provides networking between the Pritunl Cloud host and instances. Pritunl Cloud uses a networking design with high isolation which places each instance in a separate network namespace, and this design requires an additional network to bridge the isolation. The host network is then NATed, which allows internet traffic to travel through it and provides internet access to instances that do not have a public IP address. Instances that do have public IP addresses will route internet traffic using the instance public IP.

The Host IPv4 Network specifies the subnet for this network. It can be any network, and the same host network can exist on multiple Pritunl Cloud hosts in a cluster. The network does not need to be larger than the maximum number of instances that the host is able to run. In this example the network 192.168.97.0/24 will be used.
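To size the host network against the number of instances a host can run, the usable address count for a prefix length can be worked out with the standard IPv4 formula 2^(32 - prefix) - 2. The sketch below is plain arithmetic and the helper name usable_hosts is hypothetical. How many addresses Pritunl Cloud itself reserves on this network is not covered here, so the result should be read as an upper bound.

```shell
# Prints the number of usable IPv4 host addresses for a prefix length,
# excluding the network and broadcast addresses. Intended for prefix
# lengths of 30 or shorter. usable_hosts is a hypothetical helper name.
usable_hosts() {
    prefix="$1"
    echo $(( (1 << (32 - prefix)) - 2 ))
}

usable_hosts 24   # prints 254 for the example network 192.168.97.0/24
```

For the example network this leaves far more addresses than a single host is likely to need, so a smaller subnet would also work.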

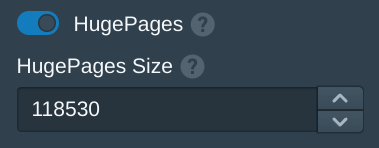

Static hugepages dedicate a portion of the system memory to hugepages. This memory will be used for instances, allowing higher memory performance and preventing the host system from disturbing memory dedicated to virtual instances.

To configure hugepages run the command below to get the memory size in megabytes. This example has 128 GB of memory. Take the total from the output and subtract 10000 to leave 10 GB of memory for the host. The host should be left with at least 4 GB of memory. The rest of the memory will be allocated to hugepages, which will be used for Pritunl Cloud instances.

free -m

              total        used        free      shared  buff/cache   available
Mem:         128530        1433      125442           2        1654      126182
Swap:          8191           0        8191

In the Pritunl Cloud web console, from the Hosts tab enable HugePages and enter the memory size with 10000 subtracted to configure the hugepages. In this example 118530 will be used to allocate roughly 118 GB for instance memory. Once done click Save. The system hugepages will not update until an instance is started. If the system has issues this value may need to be reduced to leave more memory for the system.
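The subtraction above can be scripted. The sketch below parses the Mem: row of free -m style output and subtracts the memory reserved for the host. The helper names are hypothetical, and the sample row is the one from this example rather than live output.

```shell
# Prints total memory in MB from `free -m` style output read on stdin.
total_mem_mb() {
    awk '/^Mem:/ { print $2 }'
}

# Prints the hugepages size in MB after reserving memory for the host.
# Arguments: total MB, reserved MB (defaults to 10000 as in the example).
hugepages_mb() {
    echo $(( $1 - ${2:-10000} ))
}

# Sample Mem: row taken from the example output above.
sample='Mem: 128530 1433 125442 2 1654 126182'
total="$(printf '%s\n' "$sample" | total_mem_mb)"
hugepages_mb "$total"   # prints 118530
```

On a live host the total can be read with free -m | total_mem_mb instead of the sample row. After an instance has been started, grep Huge /proc/meminfo should show the resulting hugepages allocation.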

Launch Instance

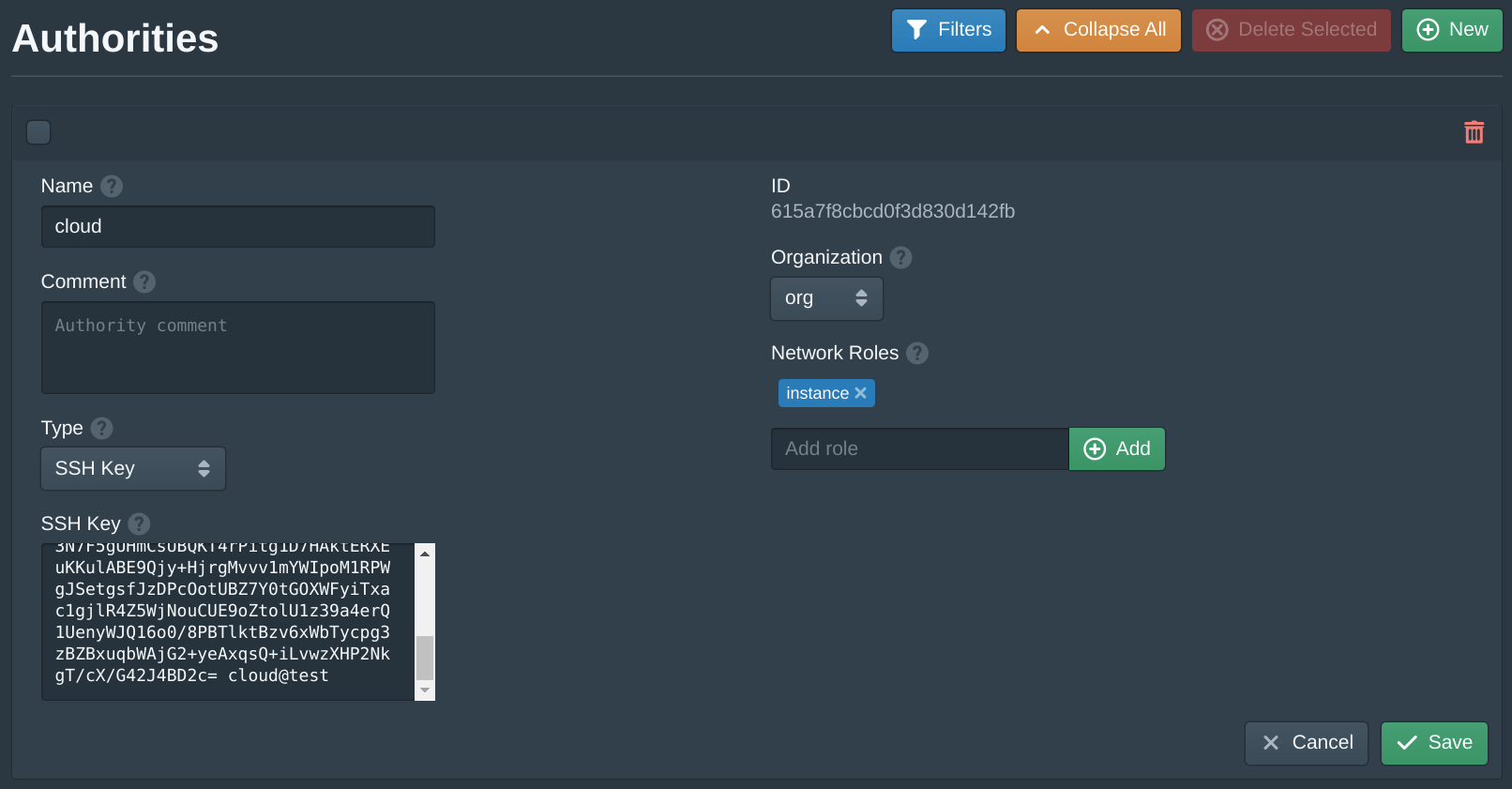

Open the Authorities tab and set the SSH Key field to your public SSH key. Then click Save.

This authority will associate an SSH key with instances that share the same instance role. Pritunl Cloud uses roles to match authorities and firewalls to instances. The organization must also match.

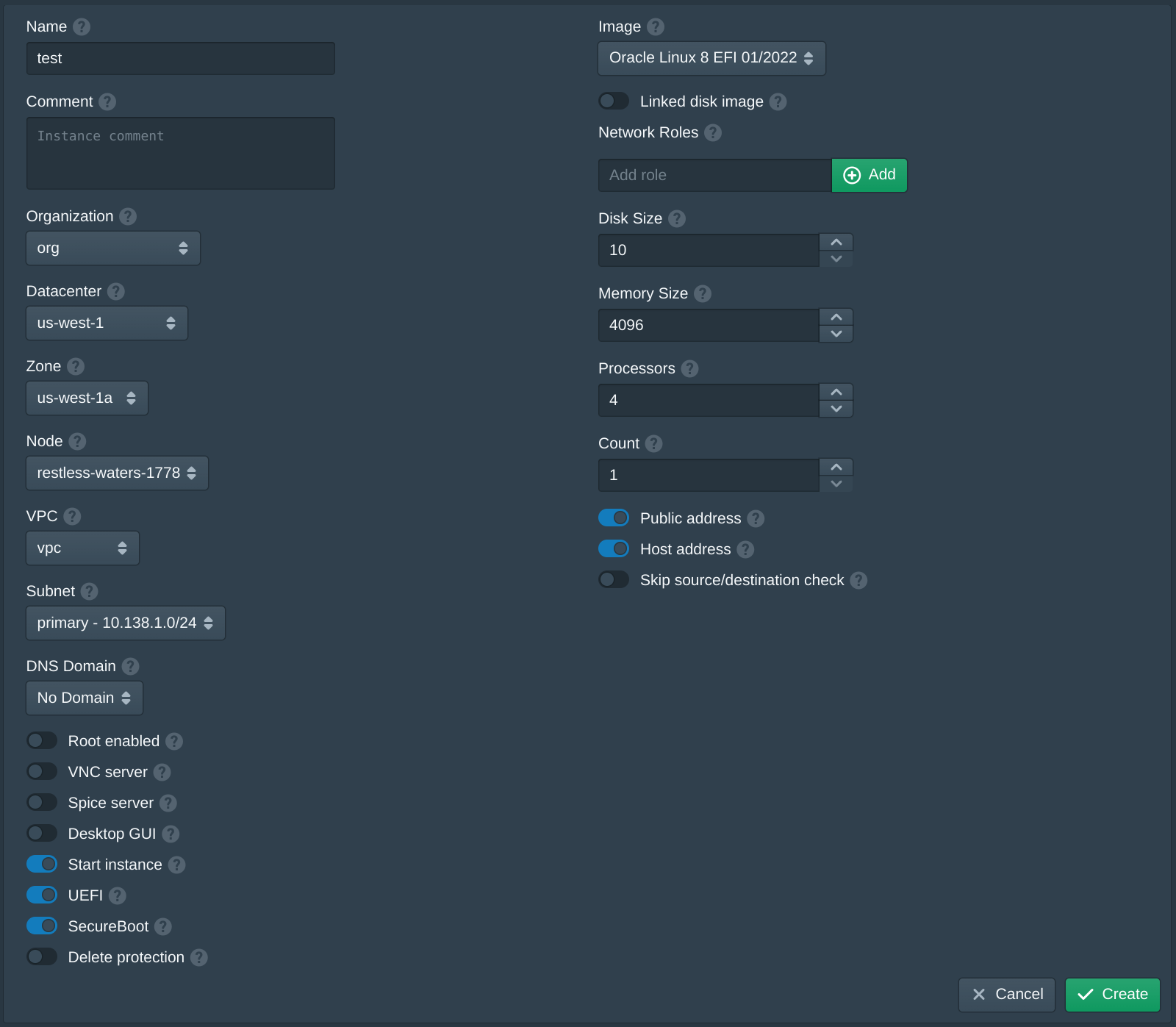

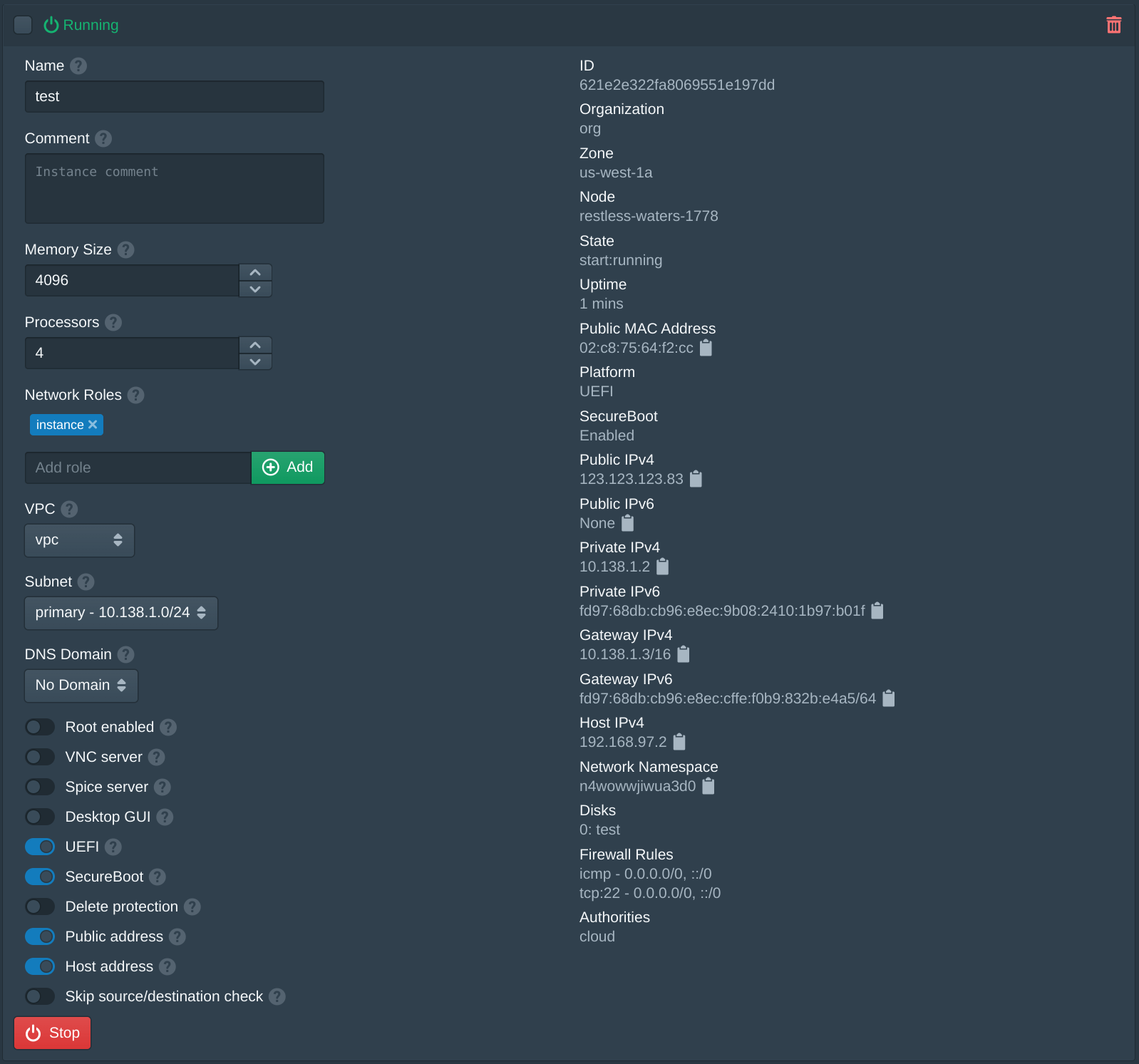

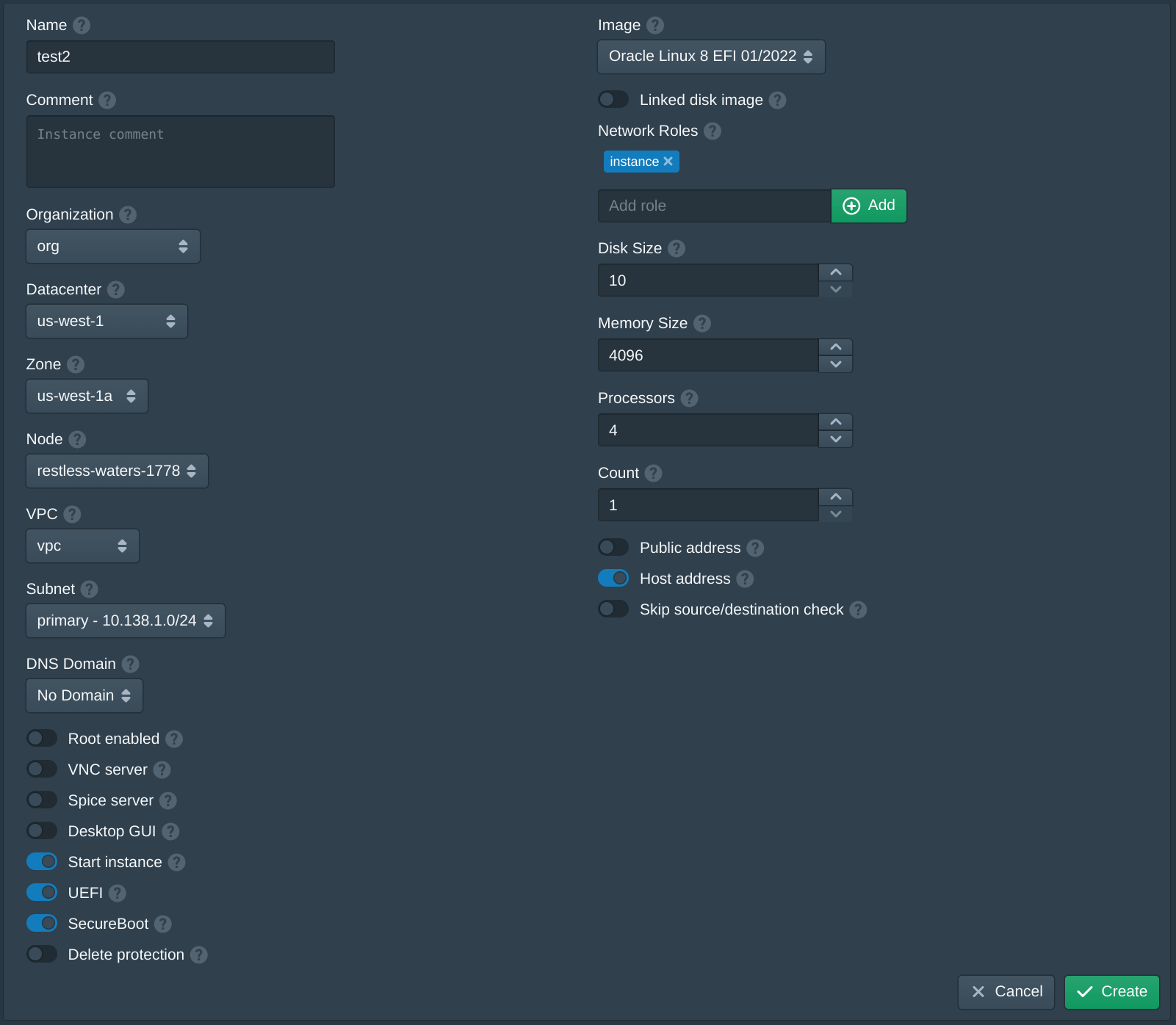

Next open the Instances tab and click New to create a new instance. Set the Name to test, the Organization to org, the Datacenter to us-west-1, the Zone to us-west-1a, the VPC to vpc, the Subnet to primary and the Node to the first available node. Then set the Image to the latest version of Oracle Linux 8 EFI. Then enter instance in the Network Roles field and click Add. This will associate the default firewall and the authority with the SSH key above. Then click Create. The create instance panel will remain open with the fields filled to allow quickly creating multiple instances. Once the instances are created click Cancel to close the dialog.

For this instance the Public address option will be enabled to give this instance a public IP. This will use DHCP for the instance bridged interface. If the Pritunl Cloud host is run on a private network this will be a private IP address on that network.

When creating the first instance the instance Image will be downloaded and the signature of the image will be validated. This may take a few minutes. Future instances will use a cached image.

After the instance has been created the server can be accessed using the public IP address shown and the cloud username. For this example this is ssh cloud@123.123.123.83.

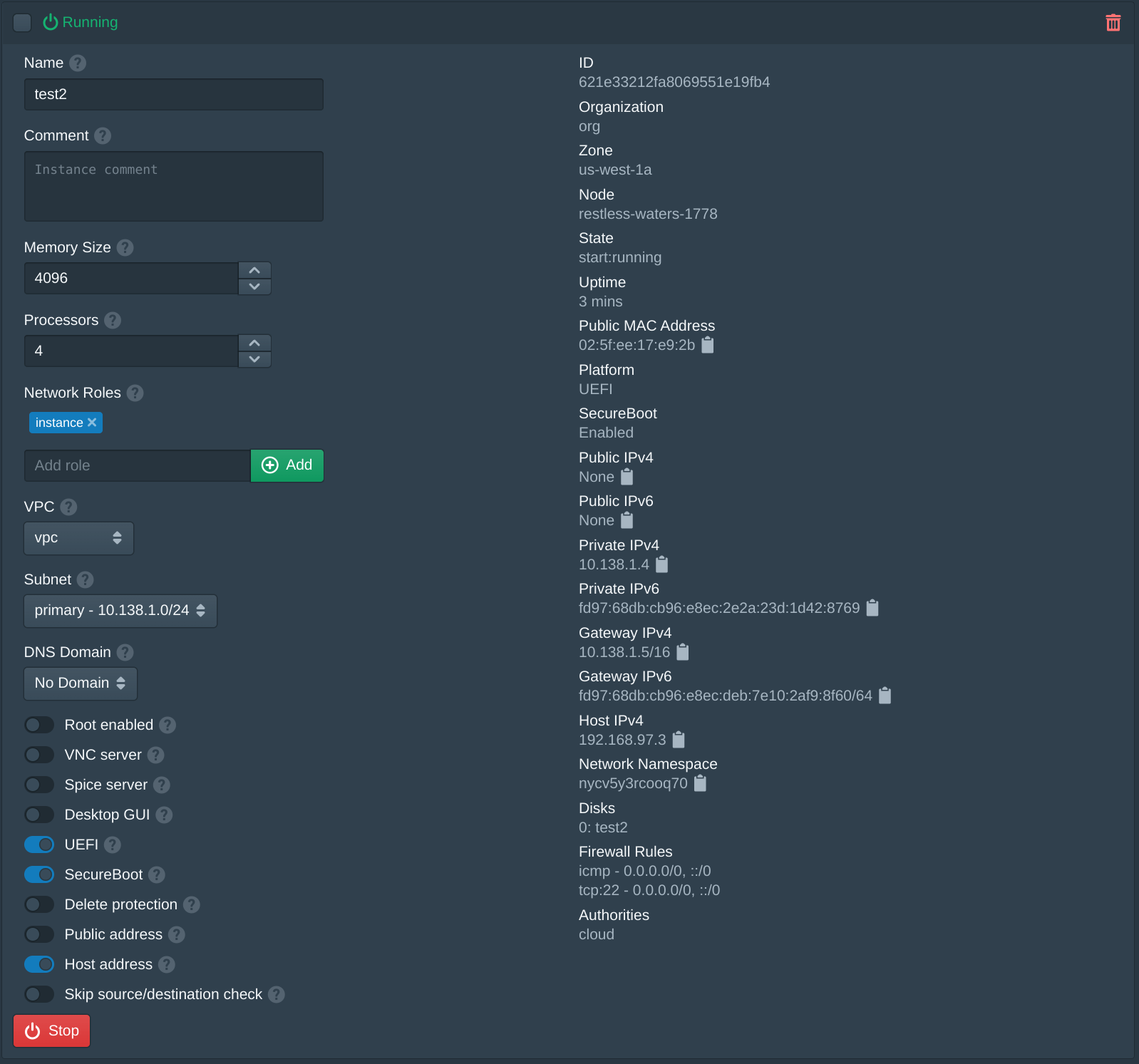

Next create an additional instance using the same options above with Public address disabled.

To access this instance, use an SSH jump proxy from the other instance to the instance Private IPv4 address shown below. For this example this is ssh -J cloud@123.123.123.83 cloud@10.138.1.4. This will first connect to the instance above and then connect to the internal instance using the VPC IP address. For additional network access a VPN server can be configured to route the VPC network. By default the firewall rules allow any IP address to access SSH. If the rules are restricted, the firewall will need to allow SSH access from the private IP address of the jump instance.

Optionally the public IP address of the Pritunl Cloud host can also be used as a jump server to connect to the Host IPv4 address of the instance. For this example this is ssh -J ubuntu@123.123.123.82 cloud@192.168.97.3.
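For repeated access, the jump connection can be stored in the OpenSSH client configuration using the ProxyJump option. The sketch below only prints a config entry. The function name jump_host_entry and the alias private-test are hypothetical, and the addresses are the ones from this example.

```shell
# Prints an OpenSSH client config entry that reaches a private instance
# through a jump host. Arguments: alias, jump target, private address.
# jump_host_entry and the alias are hypothetical names for illustration.
jump_host_entry() {
    cat <<EOF
Host $1
    HostName $3
    User cloud
    ProxyJump $2
EOF
}

jump_host_entry private-test cloud@123.123.123.83 10.138.1.4
```

Appending the printed entry to ~/.ssh/config allows connecting with ssh private-test instead of typing the full -J command each time.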

If an instance fails to start refer to the Debugging section.

Instance Usage

The default firewall will only allow SSH traffic. This can be changed from the Firewalls tab. To provide public access to services running on the instances, the built-in load balancer functionality can be used.

To use the Pritunl Cloud load balancer, DNS records will need to be configured for the Pritunl Cloud server. When a web request is received by the Pritunl Cloud server, the domain will be used to either route that request to an instance through the load balancer or provide access to the admin console. In the Nodes tab, once the Load Balancer option is enabled, fields for Admin Domain and User Domain will be shown. These DNS records must point to the Pritunl Cloud server IP. The admin domain provides access to the admin console. The user domain provides access to the user web console, which is a more limited version of the admin console intended for non-administrator users to manage instance resources. Non-administrator users are limited to accessing resources only within the organizations that they have access to.
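Before entering the domains it can be useful to confirm that each DNS record already points at the Pritunl Cloud server. The sketch below assumes a glibc-based system where getent supports the ahostsv4 database. The helper names are hypothetical, and the domain and IP in the commented example are placeholders.

```shell
# Prints the first IPv4 address a name resolves to, using the system
# resolver through getent (assumes glibc's getent with ahostsv4).
resolve_ipv4() {
    getent ahostsv4 "$1" | awk '{ print $1; exit }'
}

# Succeeds if the domain resolves to the expected server IP.
# points_to is a hypothetical helper name.
points_to() {
    [ "$(resolve_ipv4 "$1")" = "$2" ]
}

# Example with a placeholder domain and a documentation-range IP:
# points_to cloud-admin.example.com 203.0.113.10 && echo "admin domain OK"
```

The same check can be repeated for the user domain. A record that was changed recently may take some time to propagate before the check succeeds.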
